Your core electronic health record (EHR) systems hold a decade’s worth of patient encounters. Your auxiliary platforms house claims and lab results going back even further. Yet, your data warehouse likely remains starved of both – because moving clinical data from where it is captured to where it can be analyzed is not a configuration problem. It is an architectural one.

This is the reality for most health systems today. EHRs were designed as “systems of record” to facilitate documentation at the point of care, not as “systems of insight” for analytics. The result? Organizations with massive digital footprints still cannot answer basic population health questions without weeks of manual data extraction, brittle interface work, or API calls that behave inconsistently across different legacy environments.

The data exists. However, research from the HIMSS Global Health Conference reveals that 57% of physicians identify interoperability as their primary obstacle in maximizing the value of health information technology. Transforming raw, proprietary records into a stream that is clean, standardized, and HIPAA-defensible is where most healthcare data engineering efforts break down.

This article explains exactly why that happens and what a properly designed healthcare data pipeline looks like.

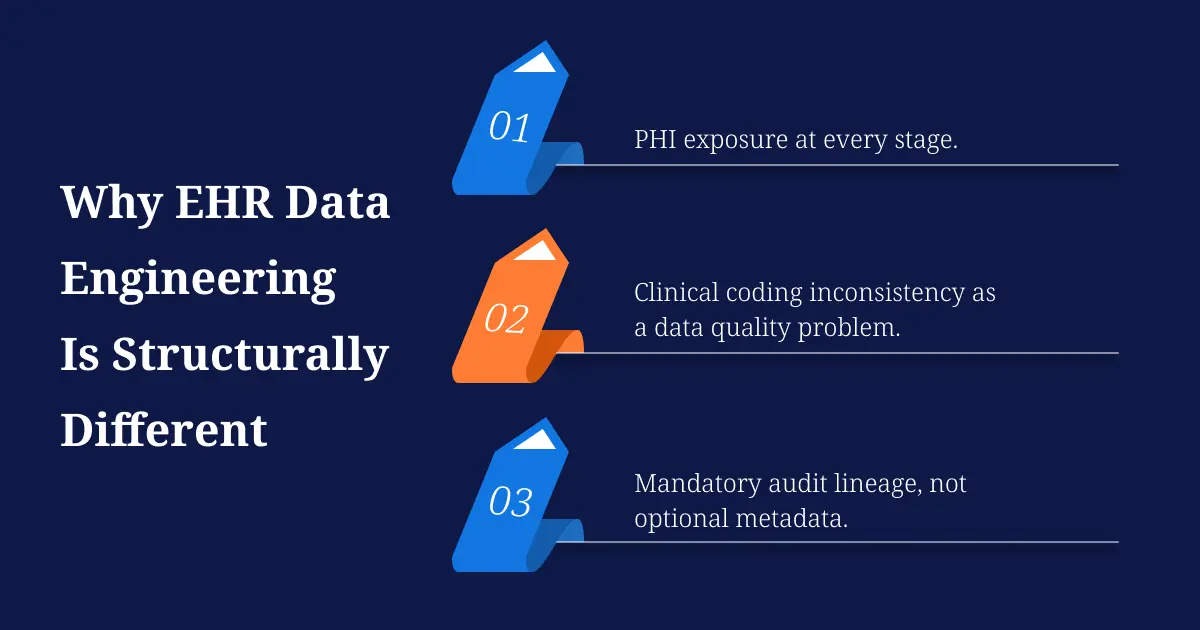

Why EHR Data Engineering Is Structurally Different

Standard data engineering solves for schema drift, pipeline latency, and system reliability. Healthcare data engineering inherits all of that and adds three layers that have no equivalent in most other industries.

PHI exposure at every stage. In a typical SaaS data pipeline, sensitive fields are a small subset of the total data. In a clinical pipeline, nearly every field is a potential HIPAA identifier: patient name, date of birth, admission date, diagnosis code, and provider ID. An EHR data pipeline design that treats PHI handling as a transformation step rather than an architectural constraint will produce audit failures before it ever reaches production. HIPAA-compliant data engineering means encryption in transit and at rest, fine-grained role-based access controls, automated audit logging, and VPC-isolated compute, all engineered at the infrastructure layer, not the application layer.

Clinical coding inconsistency as a data quality problem. Clinical data routinely arrives with incomplete, outdated, or duplicate entries, with inconsistently applied terminologies that create ambiguity across systems. Labs arrive coded in LOINC, but not always with the same LOINC version. Diagnoses reference ICD-10 codes, but many clinicians enter free-text descriptions that bypass structured coding entirely. Medications reference RxNorm in some systems and NDC codes in others. Before any clinical data analytics workload can run reliably, a normalization layer must resolve these conflicts as a deterministic pipeline step, not a manual remediation task.

Mandatory audit lineage, not optional metadata. In GxP-regulated environments used in life sciences and pharma, 21 CFR Part 11 requires validated, traceable data lineage for every transformation applied to a dataset. HIPAA adds access logging requirements. These are not post-processing tasks. A pipeline without automated lineage tracking built in is not audit-ready, regardless of how well the transformation logic performs.

The Dual-Standard Problem: HL7 v2 and FHIR Running Side by Side

One of the most misunderstood aspects of EHR data integration is that FHIR R4 did not replace HL7 v2. In most production health systems, both run simultaneously and serve different functions.

HL7 v2 message feeds handle real-time clinical events: ADT (admission, discharge, transfer) notifications, lab results via ORU messages, and clinical documentation via MDM messages. These feeds have been running in hospitals for decades and are deeply embedded in clinical workflows. FHIR R4 APIs serve newer use cases: patient-facing app access, payer-to-provider data exchange, and more recent analytics integrations. Hospitals will still have HL7 v2 interfaces and batch reports for some time, and a well-designed pipeline architecture acknowledges this. Think of HL7 v2 as a reliable ‘telegraph’ for real-time events and FHIR as a modern ‘webpage’ for data exchange; a robust pipeline must speak both languages simultaneously.

The engineering challenge this creates: HL7 v2 messages are event-driven and arrive as positional pipe-delimited text. FHIR R4 resources are RESTful JSON objects structured around clinical resource types. Parsing, validating, and routing both into the same raw data zone requires separate ingestion logic, but a unified schema downstream. Organizations that build separate pipelines for each create a massive reconciliation risk, frequently resulting in fragmented patient identities where a single clinical encounter appears as two disconnected records.

The practical solution is an event-streaming layer, typically Kafka, that accepts both HL7 v2 feeds and FHIR API payloads as distinct topics, normalizes them through separate parser services, and lands both into a common staging zone before any transformation logic runs. This is how you handle FHIR and HL7 simultaneously without breaking existing clinical interfaces.

The Clinical Data Normalization Problem

Raw EHR data extracted from Epic or Cerner cannot go directly into a data warehouse and be used for analytics. It needs a normalization layer that most EHR-to-analytics migration projects underestimate.

As the clinical research paradigm shifts toward data centricity, the need for quality control in the secondary use of EHR data has become increasingly critical, with standardized quality control methods and automation identified as necessary foundations for reliable secondary use.

In practice, this means three specific engineering problems:

Terminology mapping. Labs extracted from one Epic instance may use LOINC 2.69. Labs extracted from a Cerner instance used by an affiliated clinic may reference local codes with no LOINC equivalent. Before these datasets can be queried together, every coded field needs a deterministic mapping applied in the transformation layer. Attempting to resolve this at the analytics layer, in SQL queries or BI tools, produces inconsistency at scale.

Free-text extraction. A significant volume of clinically meaningful information lives in progress notes, discharge summaries, and radiology reads. None of this enters a structured warehouse field without an NLP preprocessing step. Clinical NLP is not general-purpose NLP: negation detection (“no evidence of pneumonia”), temporal reasoning (“history of”), and clinical abbreviation resolution require models trained on medical corpora, not general text.

Deduplication across systems. The same patient exists across emergency department records, outpatient visits, lab systems, pharmacy databases, and insurance claims, often represented differently in each system. A Master Patient Index is not optional in a multi-EHR environment. Without patient identity resolution upstream, every downstream model and report produces results that cannot be trusted.

What a Production-Ready EHR Data Pipeline Architecture Looks Like

A functioning EHR data engineering solution addresses ingestion, normalization, compliance, and analytics readiness as a connected pipeline, not sequential phases handed off between teams.

Ingestion layer

Kafka handles both real-time HL7 v2 event streams and FHIR R4 API pulls as separate topics landing in a raw zone. No transformation happens here. The raw zone preserves source fidelity for audit and reprocessing.

Transformation and normalization layer

Spark handles distributed transformation at scale. This is where LOINC mappings, RxNorm normalization, ICD-10 validation, and free-text NLP extraction run as automated pipeline steps. Records with unresolvable codes are quarantined for review, not silently passed downstream as nulls.

Compliance layer

PHI tokenization and de-identification run as pipeline-level processes before data reaches the analytics zone. Automated lineage tracking generates audit logs as a byproduct of transformation, not as a separate process. This keeps the pipeline HIPAA-compliant and GxP-ready without slowing transformation throughput.

Analytics and serving layer

Research comparing clinical data warehouses, data lakes, and data lakehouses found that the lakehouse architecture best balances robust data governance with the flexibility required for advanced analytics workloads. This ‘Lakehouse’ approach ensures that your data is no longer stuck in a ‘read-only’ warehouse. By balancing governance with flexibility, systems like Databricks or Snowflake allow you to run standard financial reports and advanced clinical AI models simultaneously from the same source of truth, eliminating the need for redundant, costly data silos.

The Intuceo Approach to Healthcare Data Engineering

Intuceo’s healthcare data engineering practice is built on one principle: compliance and performance are not tradeoffs in clinical data pipelines. They are both requirements, and the architecture must satisfy both from the start.

Intuceo engineers HIPAA-validated, FISMA-compliant data environments on Azure and AWS that handle real-time HL7 and FHIR orchestration at production scale. Every pipeline is built with automated audit logging, PHI tokenization at the infrastructure layer, and real-time data quality monitoring to prevent normalization failures from reaching model training or reporting. The firm’s Explainable AI (XAI) layer ensures that clinical ML outputs carry the evidence trail required for regulatory review, not just a prediction score.

Intuceo has built production clinical data platforms for Florida Blue, GuideWell Health, and UF Health, moving raw EHR extracts through normalization, compliance, and into analytics-ready “Gold Record” status. The output is a single, unified patient record that consolidates EHR data, claims, and social determinants of health into one source of truth, ready for population health queries, predictive modeling, and HEDIS or STAR measure reporting.

Ready to move from data-rich to insight-rich?

Whether you’re navigating payer-side HEDIS optimization, provider-side denial management, or building a population health program for a value-based care contract, our healthcare analytics team is ready to design your roadmap.

Frequently Asked Questions

1.Why does EHR data extraction keep breaking when the source system updates?

HL7 v2 interfaces are brittle because they depend on positional field parsing. When a source EHR vendor changes a message segment, downstream parsers fail silently or produce incorrect mappings. The fix is schema-versioned parser logic with automated regression testing on interface updates, not manual fixes each time a vendor releases a patch.

2.How do I design a data pipeline for EHR data that is both HIPAA-compliant and performant?

PHI de-identification and tokenization need to run at the pipeline level, within a HIPAA-validated infrastructure environment, before data reaches the analytics zone. Compliance overhead belongs on the infrastructure layer, not inside transformation logic. When built this way, compliance does not add latency to the data path.

3.How do I clean and standardize messy clinical data for analytics and machine learning?

Apply terminology mappings (LOINC, RxNorm, ICD-10/SNOMED-CT) as deterministic transformation steps inside the pipeline, before data reaches the warehouse. Quarantine records with unmapped or conflicting codes for domain expert review. Any ML model trained on unnormalized clinical codes will degrade as source system coding practices change over time.

4.What are the most common mistakes in healthcare data warehousing?

Three patterns repeat consistently: loading raw EHR data without clinical coding normalization, treating PHI handling as a query-layer concern rather than a pipeline-level design decision, and building separate infrastructure for real-time HL7 feeds and batch analytics instead of a unified lakehouse that serves both.

5. How do I move EHR data from legacy systems to the cloud without disrupting clinical operations?

The safest approach is a parallel-run strategy: stand up the new cloud pipeline to ingest and process data alongside the legacy system before cutover. This validates data fidelity and normalization accuracy without creating a dependency on the new pipeline until it is production-proven. Cutover becomes a routing switch, not a migration event.

Artificial Intelligence

Artificial Intelligence DataOps & Engineering

DataOps & Engineering Digital Engineering

Digital Engineering Enterprise Transformation

Enterprise Transformation Healthcare

Healthcare Advanced Manufacturing

Advanced Manufacturing Supply Chain & Transportation

Supply Chain & Transportation Engineering & Auto

Engineering & Auto Public Sector & Strategic Markets

Public Sector & Strategic Markets iPDLC (Lifecycle)

iPDLC (Lifecycle) iTMS

iTMS Modular AI

Modular AI Blogs

Blogs Technical Briefs

Technical Briefs Use Cases

Use Cases